stoney conductor: VM Backup


= Overview =

This page describes how VMs and VM-Templates are backed up and restored inside the [http://www.stoney-cloud.org stoney cloud].

= Requirements =

* sstBackupRootDirectory: file:///var/backup/virtualization

** This directory can be a dedicated partition. It needs to be (at least) the same size as the partition holding the live images, because it holds a copy of them.

* sstBackupRetainDirectory: file:///var/virtualization/retain

** This directory must be on the same partition as the live images.

* A working stoney cloud, installed according to [[stoney cloud: Single-Node Installation]] or [[stoney cloud: Multi-Node Installation]].

* The backup configuration must be set, see [[stoney_conductor:_OpenLDAP_directory_data_organisation#Backup | stoney conductor: OpenLDAP directory data organisation]].
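The retain directory requirement can be checked before the first backup run. The following is a minimal C sketch, assuming that "same partition" can be approximated by "same filesystem device" as reported by <code>stat(2)</code> (the <code>st_dev</code> field); the function name <code>same_filesystem</code> is hypothetical. Presumably the requirement exists so that moving an image from the retain directory into place (the <code>mv</code> in the restore procedure) is a cheap rename rather than a full copy, since <code>rename(2)</code> only works within one filesystem.

<source lang="c">
#include <sys/stat.h>

/* Hypothetical helper: returns 1 if both paths live on the same
 * filesystem (identical st_dev), 0 if they do not, and -1 if either
 * path cannot be stat()ed (e.g. it does not exist). */
int same_filesystem(const char *path_a, const char *path_b)
{
    struct stat st_a;
    struct stat st_b;

    if (stat(path_a, &st_a) != 0 || stat(path_b, &st_b) != 0)
        return -1;

    return st_a.st_dev == st_b.st_dev ? 1 : 0;
}
</source>

For example, <code>same_filesystem("/var/virtualization/retain", "/var/virtualization/vm-persistent")</code> should return 1 on a correctly set up node; the live-image path in this call is an assumption and depends on your installation.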

= Backup =

== Basic idea ==

The main idea for backing up a VM or a VM-Template is to divide the task into three subtasks:

* createSnapshot: Create a disk-only snapshot. A new overlay file is created and all write operations are performed on this file. The underlying disk image is now read-only.

* exportSnapshot: Copy the read-only disk image to the backup location.

* commitSnapshot: Commit the write operations from the overlay back to the underlying (original) disk image. The underlying image is then read-write again and the overlay image can be deleted.

A more detailed technical description of these three sub-processes can be found [[#Sub-Processes | here]].

Furthermore, there is a control instance which can call these three sub-processes independently for a given machine. This allows the stoney cloud to handle different cases:

=== Backup a single machine ===

The procedure for backing up a single machine is very simple: call the three sub-processes (createSnapshot, exportSnapshot and commitSnapshot) one after the other. The control instance therefore only needs some very basic logic:

<source lang="c">
#include <stdbool.h>
#include <stdio.h>

/* The three sub-processes; each returns true on success */
bool createSnapshot( const char *machine );
bool exportSnapshot( const char *machine );
bool commitSnapshot( const char *machine );

int main( int argc, char *argv[] )
{
    if ( argc < 2 )
    {
        fprintf( stderr, "Usage: %s <machine>\n", argv[0] );
        return 1;
    }
    const char *machine = argv[1];

    if ( createSnapshot( machine ) )
    {
        if ( exportSnapshot( machine ) )
        {
            if ( commitSnapshot( machine ) )
            {
                printf( "Successfully backed up machine %s\n", machine );
                return 0;
            } else
            {
                printf( "Error while committing snapshot for machine %s\n", machine );
            }
        } else
        {
            printf( "Error while exporting snapshot for machine %s\n", machine );
        }
    } else
    {
        printf( "Error while creating snapshot for machine %s\n", machine );
    }
    return 1;
}
</source>

=== Backup multiple machines at the same time ===

When backing up multiple machines at the same time, the snapshots of the machines must be taken as close together in time as possible. Therefore the control instance should first call the createSnapshot process for all machines. After every machine has been snapshotted, the control instance can call the exportSnapshot and commitSnapshot processes for each machine. The important part is that the control instance remembers whether the snapshot of a given machine was successful: if the snapshot failed, it must not call the exportSnapshot and commitSnapshot processes for that machine. So the control instance needs a little more logic:

<source lang="c">
#include <stdbool.h>
#include <stdio.h>

/* The three sub-processes; each returns true on success */
bool createSnapshot( const char *machine );
bool exportSnapshot( const char *machine );
bool commitSnapshot( const char *machine );

void backupMachines( const char *machines[], int count )
{
    if ( count <= 0 )
        return;

    /* Remembers, per machine, whether its snapshot was successful */
    bool successful_snapshot[count];

    /* Snapshot all machines first, so that the snapshots are as close
       together in time as possible */
    for ( int i = 0; i < count; i++ )
    {
        successful_snapshot[i] = createSnapshot( machines[i] );
        if ( !successful_snapshot[i] )
            printf( "Error while creating snapshot for machine %s\n", machines[i] );
    }

    /* Export and commit only the machines with a successful snapshot */
    for ( int i = 0; i < count; i++ )
    {
        if ( !successful_snapshot[i] )
            continue;

        if ( exportSnapshot( machines[i] ) )
        {
            if ( commitSnapshot( machines[i] ) )
            {
                printf( "Successfully backed up machine %s\n", machines[i] );
            } else
            {
                printf( "Error while committing snapshot for machine %s\n", machines[i] );
            }
        } else
        {
            printf( "Error while exporting snapshot for machine %s\n", machines[i] );
        }
    }
}
</source>

=== Sub-Processes ===

See also [[Libvirt_external_snapshot_with_GlusterFS]].

==== createSnapshot ====

For the commands see [[Libvirt_external_snapshot_with_GlusterFS#Part_2:_Create_the_snapshot_using_virsh]].


For the workflow see [[stoney_conductor:_prov-backup-kvm#createSnapshot]].

==== exportSnapshot ====

# Simply copy the underlying image to the backup location:

#* <source lang="bash">cp -p /<path>/<to>/<image>.qcow2 /<path>/<to>/<backup>/<location>/.</source>

For the workflow see [[stoney_conductor:_prov-backup-kvm#exportSnapshot]].
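The exportSnapshot step boils down to <code>cp -p</code>. For illustration, here is a minimal C sketch of the same copy: file contents, permission bits and access/modification times. Unlike <code>cp -p</code> it does not copy ownership. The function name <code>copy_preserve</code> is hypothetical and not part of prov-backup-kvm.

<source lang="c">
#define _POSIX_C_SOURCE 200809L
#include <fcntl.h>
#include <sys/stat.h>
#include <unistd.h>

/* Hypothetical helper: copy src to dst, keeping the permission bits and
 * the access/modification times of src (ownership is not copied).
 * Returns 0 on success, -1 on any error. */
int copy_preserve(const char *src, const char *dst)
{
    struct stat st;
    struct timespec times[2];
    char buf[65536];
    ssize_t n;
    int rc = -1;

    int in = open(src, O_RDONLY);
    if (in < 0)
        return -1;
    if (fstat(in, &st) != 0) {
        close(in);
        return -1;
    }

    int out = open(dst, O_WRONLY | O_CREAT | O_TRUNC, st.st_mode & 07777);
    if (out < 0) {
        close(in);
        return -1;
    }

    /* Copy the data, handling partial writes */
    while ((n = read(in, buf, sizeof buf)) > 0) {
        char *p = buf;
        while (n > 0) {
            ssize_t w = write(out, p, (size_t)n);
            if (w < 0)
                goto done;
            p += w;
            n -= w;
        }
    }
    if (n < 0)
        goto done;

    /* Apply the source mode (open() is subject to the umask) and times */
    times[0] = st.st_atim;
    times[1] = st.st_mtim;
    if (fchmod(out, st.st_mode & 07777) == 0 && futimens(out, times) == 0)
        rc = 0;

done:
    if (close(out) != 0)
        rc = -1;
    close(in);
    return rc;
}
</source>

For multi-gigabyte qcow2 images, the shell <code>cp -p</code> used above remains the simpler choice; the sketch only shows what "preserving" means at the system-call level.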

==== commitSnapshot ====

For the commands see [[Libvirt_external_snapshot_with_GlusterFS#Cleanup.2FCommit_.28Online.29]].


For the workflow see [[stoney_conductor:_prov-backup-kvm#commitSnapshot]].


== Communication through backend ==

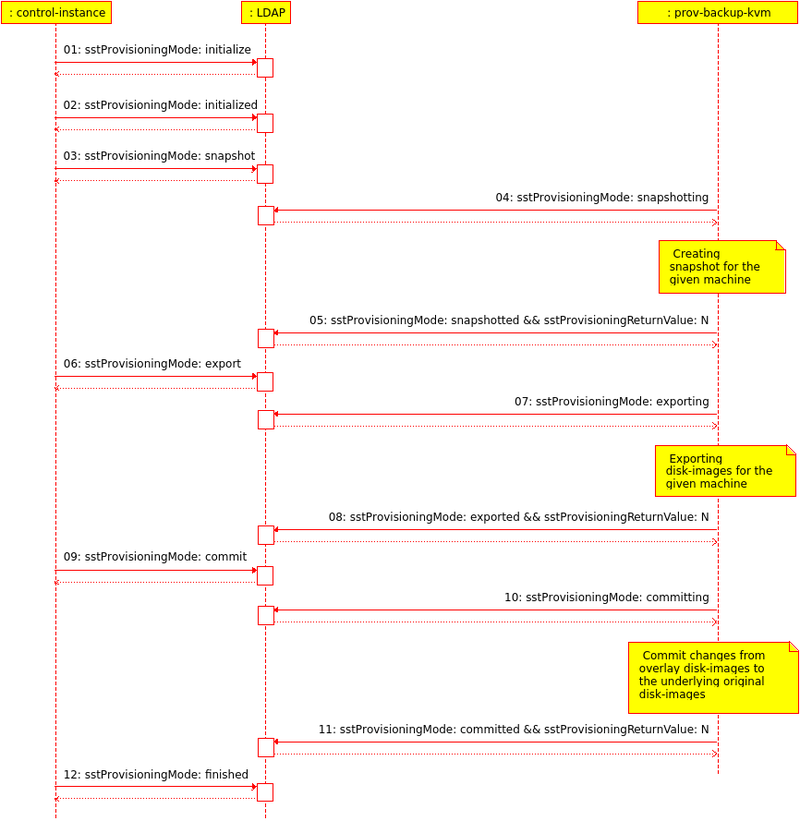

Since the stoney cloud is (as the name already says) a cloud solution, it makes sense to involve a backend (in our case OpenLDAP) in the whole process. This makes it possible to run the backup jobs decentralized on every vm-node: the control instance modifies the backend, and these changes are seen by the different backup daemons on the vm-nodes. The communication can therefore look as shown in the following picture (Figure 1):

[[File:Daemon-communication.png|800px|thumbnail|none|Figure 1: Communication between the control instance and the prov-backup-kvm daemon through the LDAP backend]]

You can modify/update this workflow by editing [[File:Daemon-communication.xmi]] (you may need the [http://uml.sourceforge.net/ Umbrello UML Modeller] diagram program for KDE to display the content properly).

=== Control-Instance Daemon Interaction for creating a Backup with LDIF Examples ===

</pre>

==== Step 06: Start the export Process (Control instance daemon) ====

By setting '''sstProvisioningMode''' to '''export''', the Control instance daemon tells the Provisioning-Backup-KVM daemon to export the disk image to the backup location.

<pre>
# The attribute sstProvisioningState is set to zero by the fc-brokerd, when sstProvisioningMode is modified to
# export (this way the Provisioning-Backup-KVM daemon knows that it must start the export process).
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
-
replace: sstProvisioningMode
sstProvisioningMode: export
</pre>

==== Step 07: Starting the export Process (Provisioning-Backup-KVM daemon) ====

As soon as the Provisioning-Backup-KVM daemon receives the export command, it sets '''sstProvisioningMode''' to '''exporting''' to tell the Control instance daemon and other interested parties that it is exporting the disk images of the virtual machine or virtual machine template.

<pre>
# The attribute sstProvisioningMode is set to exporting by the Provisioning-Backup-KVM daemon.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningMode
sstProvisioningMode: exporting
</pre>
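The record in Step 07 has the basic shape shared by these mode changes: a DN, <code>changetype: modify</code> and a replacement of '''sstProvisioningMode'''. A minimal C sketch of a helper that formats such a record, assuming the caller pipes the result into <code>ldapmodify</code> as in the other examples on this page; the function name is hypothetical, and the additional attribute changes of the other steps are not covered. It refuses values containing a newline, because an embedded newline would inject extra LDIF lines.

<source lang="c">
#include <stdio.h>
#include <string.h>

/* Hypothetical helper: format the LDIF modify record that replaces
 * sstProvisioningMode on a backup leaf. Returns 0 on success, -1 if a
 * value contains a newline or the buffer is too small. */
int ldif_set_provisioning_mode(char *buf, size_t len,
                               const char *dn, const char *mode)
{
    if (strchr(dn, '\n') != NULL || strchr(mode, '\n') != NULL)
        return -1;

    int n = snprintf(buf, len,
                     "dn: %s\n"
                     "changetype: modify\n"
                     "replace: sstProvisioningMode\n"
                     "sstProvisioningMode: %s\n",
                     dn, mode);

    /* snprintf reports the length it wanted; >= len means truncation */
    return (n < 0 || (size_t)n >= len) ? -1 : 0;
}
</source>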

==== Step 08: Finalizing the export Process (Provisioning-Backup-KVM daemon) ====

As soon as the Provisioning-Backup-KVM daemon has executed the export command, it sets '''sstProvisioningMode''' to '''exported''', '''sstProvisioningState''' to the current timestamp (UTC) and '''sstProvisioningReturnValue''' to zero, to tell the Control instance daemon and other interested parties that the export of the virtual machine or virtual machine template disk images is finished.

<pre>
# The attribute sstProvisioningState is set with the current timestamp by the Provisioning-Backup-KVM daemon, when
-
replace: sstProvisioningMode
sstProvisioningMode: exported
</pre>
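Step 08 sets '''sstProvisioningState''' to the current timestamp (UTC), but the exact value format is not shown in this excerpt. Assuming it follows the same layout as the backup leaf names (e.g. <code>ou=20121002T010000Z</code>) and the <code>date --utc +'%Y%m%dT%H%M%SZ'</code> call used in the restore guide, a daemon written in C could produce it like this (hypothetical helper):

<source lang="c">
#include <stddef.h>
#include <time.h>

/* Hypothetical helper: format t as a UTC timestamp such as
 * 20121002T010000Z. 'buf' needs room for 17 bytes (16 characters plus
 * the terminating NUL). Returns 0 on success, -1 on error. */
int format_utc_timestamp(time_t t, char *buf, size_t len)
{
    struct tm tm_utc;

    if (gmtime_r(&t, &tm_utc) == NULL)
        return -1;

    /* strftime returns 0 if the result does not fit into buf */
    return strftime(buf, len, "%Y%m%dT%H%M%SZ", &tm_utc) == 0 ? -1 : 0;
}
</source>

A call such as <code>format_utc_timestamp(time(NULL), buf, sizeof buf)</code> yields the current time; <code>gmtime_r</code> is used instead of <code>localtime_r</code> so the value is in UTC regardless of the node's time zone.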

==== Step 09: Start the commit Process (Control instance daemon) ====

By setting '''sstProvisioningMode''' to '''commit''', the Control instance daemon tells the Provisioning-Backup-KVM daemon to commit the changes from the overlay file to the underlying disk image.

<pre>
# The attribute sstProvisioningState is set to zero by the fc-brokerd, when sstProvisioningMode is modified to
# commit (this way the Provisioning-Backup-KVM daemon knows that it must start the commit process).
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
-
replace: sstProvisioningMode
sstProvisioningMode: commit
</pre>

==== Step 10: Starting the commit Process (Provisioning-Backup-KVM daemon) ====

As soon as the Provisioning-Backup-KVM daemon receives the commit command, it sets '''sstProvisioningMode''' to '''committing''' to tell the Control instance daemon and other interested parties that it is committing the changes from the overlay disk images back to the underlying ones.

<pre>
# The attribute sstProvisioningMode is set to committing by the Provisioning-Backup-KVM daemon.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningMode
sstProvisioningMode: committing
</pre>

==== Step 11: Finalizing the commit Process (Provisioning-Backup-KVM daemon) ====

As soon as the Provisioning-Backup-KVM daemon has executed the commit command, it sets '''sstProvisioningMode''' to '''committed''', '''sstProvisioningState''' to the current timestamp (UTC) and '''sstProvisioningReturnValue''' to zero, to tell the Control instance daemon and other interested parties that committing the changes from the overlay disk images back to the underlying ones is done.

<pre>
# The attribute sstProvisioningState is set with the current timestamp by the Provisioning-Backup-KVM daemon, when
-
replace: sstProvisioningMode
sstProvisioningMode: committed
</pre>

==== Step 12: Finalizing the Backup Process (Control instance daemon) ====

As soon as the Control instance daemon notices that the attribute '''sstProvisioningMode''' is set to '''committed''', it sets '''sstProvisioningMode''' to '''finished''' and '''sstProvisioningState''' to the current timestamp (UTC). All interested parties now know that the backup process is finished, and therefore a new backup process can be started.

<pre>
# The attribute sstProvisioningState is updated with current time by the fc-brokerd, when sstProvisioningMode is
</pre>
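Steps 06 to 12 form a simple hand-over protocol on the single attribute '''sstProvisioningMode'''. The following C sketch encodes only the transitions shown in these steps (the snapshot phase of the earlier steps is not covered), together with the party that writes each value. The table layout and the function name are illustrative assumptions, not code from prov-backup-kvm or the control instance.

<source lang="c">
#include <stddef.h>
#include <string.h>

/* One hand-over: the value written once 'from' has been observed,
 * and the party that writes it. */
struct mode_transition {
    const char *from;
    const char *to;
    const char *written_by;
};

/* Transitions of Steps 07 to 12 ("export" itself is written by the
 * control instance in Step 06). */
static const struct mode_transition transitions[] = {
    { "export",     "exporting",  "Provisioning-Backup-KVM daemon" }, /* Step 07 */
    { "exporting",  "exported",   "Provisioning-Backup-KVM daemon" }, /* Step 08 */
    { "exported",   "commit",     "Control instance daemon" },        /* Step 09 */
    { "commit",     "committing", "Provisioning-Backup-KVM daemon" }, /* Step 10 */
    { "committing", "committed",  "Provisioning-Backup-KVM daemon" }, /* Step 11 */
    { "committed",  "finished",   "Control instance daemon" },        /* Step 12 */
};

/* Return the next sstProvisioningMode value after 'current' and store
 * the writing party in *written_by (if not NULL), or return NULL if
 * 'current' has no successor here (e.g. "finished" or unknown). */
const char *next_provisioning_mode(const char *current, const char **written_by)
{
    for (size_t i = 0; i < sizeof transitions / sizeof transitions[0]; i++) {
        if (strcmp(transitions[i].from, current) == 0) {
            if (written_by != NULL)
                *written_by = transitions[i].written_by;
            return transitions[i].to;
        }
    }
    return NULL;
}
</source>

With a table like this, each daemon only needs to react to the values whose successor it owns; for example, the control instance acts on '''exported''' and '''committed'''.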

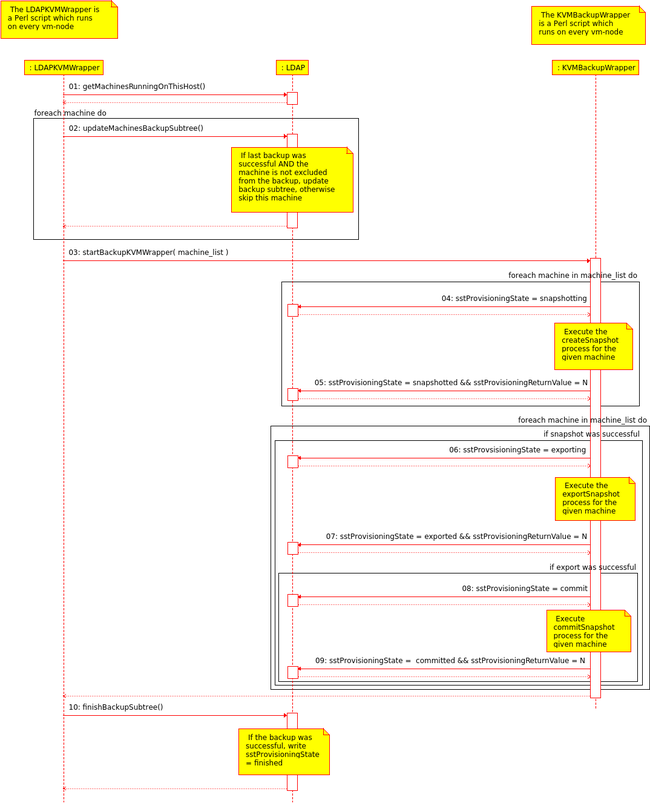

== Current Implementation (Backup) ==

Since we do not yet have a working control instance, we need a workaround for backing up the machines:

* We already have a BackupKVMWrapper.pl script (File-Backend) which executes the three [[#Sub-Processes | sub-processes ]] in the correct order for a given list of machines (see [[#Backup multiple machines at the same_time]]).

* We already have the implementation of the whole backup with the LDAP-Backend (see [[ stoney conductor: prov backup kvm ]]).

* We can now combine these two existing scripts into a wrapper (let's call it LDAPKVMWrapper) which adds some logic on top of BackupKVMWrapper.pl: the LDAPKVMWrapper generates the list of machines which need a backup.

The behaviour on our servers is as follows (c.f. Figure 2):

# The (decentralized) LDAPKVMWrapper (executed every day via cronjob) generates a list of all machines running on the current host.

#* Currently the cronjob on the hosts looks like this: <code>00 01 * * * /usr/bin/LDAPKVMWrapper.pl | logger -t Backup-KVM</code>

#* For each of these machines:

#** Check if the machine is excluded from the backup; if yes, remove the machine from the list

#* Remove the old backup leaf (the "yesterday-leaf"), and add a new one (the "today-leaf")

#* After this step, the machines are ready to be backed up

# Call the KVMBackupWrapper.pl script with the machine list as a parameter

# Wait for the KVMBackupWrapper.pl script to finish

# Go through all machines again and update the backup subtree one last time

#* Check if the backup was successful; if yes, set sstProvisioningMode = finished (see also TBD)

[[File:wrapper-interaction.png|650px|thumbnail|none|Figure 2: How the two wrappers interact with the LDAP backend]]

You can modify/update this workflow by editing [[File:wrapper-interaction.xmi]] (you may need the [http://uml.sourceforge.net/ Umbrello UML Modeller] diagram program for KDE to display the content properly).
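The first step of the LDAPKVMWrapper (dropping machines that are excluded from the backup) is a plain list filter. A minimal C sketch, assuming the exclusion flag (the sstbackupexcludefrombackup attribute, see [[#How to exclude a machine from the backup]]) has already been read from LDAP into a hypothetical <code>struct machine</code>; the other checks performed by the wrapper are not modelled:

<source lang="c">
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical in-memory view of a machine's backup settings */
struct machine {
    const char *uuid;
    bool exclude_from_backup;   /* sstbackupexcludefrombackup: TRUE */
};

/* Compact 'machines' in place so that only machines that are not
 * excluded from the backup remain (keeping their order); returns the
 * number of remaining machines. */
size_t filter_backup_list(struct machine *machines, size_t count)
{
    size_t kept = 0;

    for (size_t i = 0; i < count; i++) {
        if (!machines[i].exclude_from_backup)
            machines[kept++] = machines[i];
    }
    return kept;
}
</source>

Filtering in place keeps the order of the remaining machines, so the list handed to the backup script matches the order in which the machines were found on the host.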

* If something does not work at all, the whole backup process can be deactivated by disabling the LDAPKVMWrapper cronjob:

** <code>crontab -e</code>

** Comment out the LDAPKVMWrapper cronjob line: <code>#00 01 * * * /usr/bin/LDAPKVMWrapper.pl | logger -t Backup-KVM</code>

=== How to exclude a machine from the backup ===

To exclude a machine from the backup run, log in to one of the [[VM-Node | vm-nodes]] and add the following entry to your LDAP directory:

<source lang="bash">
machineuuid="<UUID OF THE MACHINE-NAME>" # e.g.: b9d13dbc-9ab7-4948-9daa-a5709de83dc2
cat << EOF | ldapadd -D cn=Manager,o=stepping-stone,c=ch -H ldaps://ldapm.stepping-stone.ch/ -W -x
dn: ou=backup,sstVirtualMachine=${machineuuid},ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
objectclass: top
objectclass: organizationalUnit
objectclass: sstVirtualizationBackupObjectClass
ou: backup
sstbackupexcludefrombackup: TRUE
EOF
</source>


If the backup subtree already exists in the LDAP directory, you need to add the sstbackupexcludefrombackup attribute (together with the sstVirtualizationBackupObjectClass object class) to the existing entry instead:

<source lang="bash">
machineuuid="<UUID OF THE MACHINE-NAME>" # e.g.: b9d13dbc-9ab7-4948-9daa-a5709de83dc2
cat << EOF | ldapmodify -D cn=Manager,o=stepping-stone,c=ch -H ldaps://ldapm.stepping-stone.ch/ -W -x
dn: ou=backup,sstVirtualMachine=${machineuuid},ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
add: objectClass
objectClass: sstVirtualizationBackupObjectClass
-
add: sstbackupexcludefrombackup
sstbackupexcludefrombackup: TRUE
EOF
</source>
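Both LDIF snippets interpolate <code>${machineuuid}</code> directly into the DN, so a forgotten placeholder or a typo ends up in the DN. If you script this step, a pre-flight check like the following minimal C sketch can catch that. The helper name is hypothetical, and it assumes machine names are canonical 8-4-4-4-12 hexadecimal UUIDs like the example above.

<source lang="c">
#include <ctype.h>
#include <stdbool.h>
#include <string.h>

/* Hypothetical helper: true if s is a canonical UUID, i.e. 36
 * characters with hyphens at positions 8, 13, 18 and 23 and hex
 * digits everywhere else (e.g. b9d13dbc-9ab7-4948-9daa-a5709de83dc2). */
bool is_canonical_uuid(const char *s)
{
    if (strlen(s) != 36)
        return false;

    for (size_t i = 0; i < 36; i++) {
        if (i == 8 || i == 13 || i == 18 || i == 23) {
            if (s[i] != '-')
                return false;
        } else if (!isxdigit((unsigned char)s[i])) {
            return false;
        }
    }
    return true;
}
</source>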

==== Re-include the machine in the backup ====

To re-include a machine, simply delete the machine's whole backup subtree. It will be recreated during the next backup run.

== Next steps ==

= Restore =

'''Attention:''' The restore process is not yet defined or implemented. The following documentation describes the old restore process.

== Basic idea ==

The restore process, similar to the backup process, can be divided into three sub-processes:

* Non-User-Interaction phase: The daemons communicate with each other through the backend and the restore process continues without further user input (c.f. [[#Communication_through_backend_2 | Communication through backend]])

=== Sub Processes ===

==== Unretain small files ====

This workflow assumes that the backup directory is on the same physical server as the retain directory (protocol is file://).

# Copy the backend-entry file from the backup directory to the retain directory:

#* <source lang="bash">cp -p /path/to/backup/vm-001.backend /path/to/retain/vm-001.backend</source>

# Copy the XML description from the backup directory to the retain directory:

#* <source lang="bash">cp -p /path/to/backup/vm-001.xml /path/to/retain/vm-001.xml</source>

# Compare the backend-entry file (the one in the retain directory) with the live backend entry:

#* Resolve all conflicts between these two backend entries

#** Modify the backend entry at the retain location accordingly

# Apply the same changes to the XML description at the retain location (backend entry and XML description need to be consistent).

==== Unretain large files ====

# Copy the state file from the backup directory to the retain directory:

#* <source lang="bash">cp -p /path/to/backup/vm-001.state /path/to/retain/vm-001.state</source>

# Copy the disk image(s) from the backup directory to the retain directory:

#* <source lang="bash">cp -p /path/to/backup/vm-001.qcow2 /path/to/retain/vm-001.qcow2</source>

#** '''Important:''' If a VM has more than one disk image, repeat this step for every disk image.

==== Restore the VM ====

# Shut down the VM if it is running:

#* <source lang="bash">virsh shutdown vm-001</source>

# Undefine the VM if it is still defined:

#* <source lang="bash">virsh undefine vm-001</source>

# Overwrite the original disk image:

#* <source lang="bash">mv /path/to/retain/vm-001.qcow2 /path/to/images/vm-001.qcow2</source>

#** '''Important:''' If a VM has more than one disk image, repeat this step for every disk image.

# Restore the VM's backend entry:

#* Write the backend entry from the retain location (<code>/path/to/retain/vm-001.backend</code>) to the backend

# Overwrite the VM's XML description with the one from the retain location:

#* <source lang="bash">cp -p /path/to/retain/vm-001.xml /path/to/xmls/vm-001.xml</source>

# Restore the VM from the state file with the corrected XML:

#* <source lang="bash">virsh restore /path/to/retain/vm-001.state --xml /path/to/xmls/vm-001.xml</source>

== Communication through backend ==

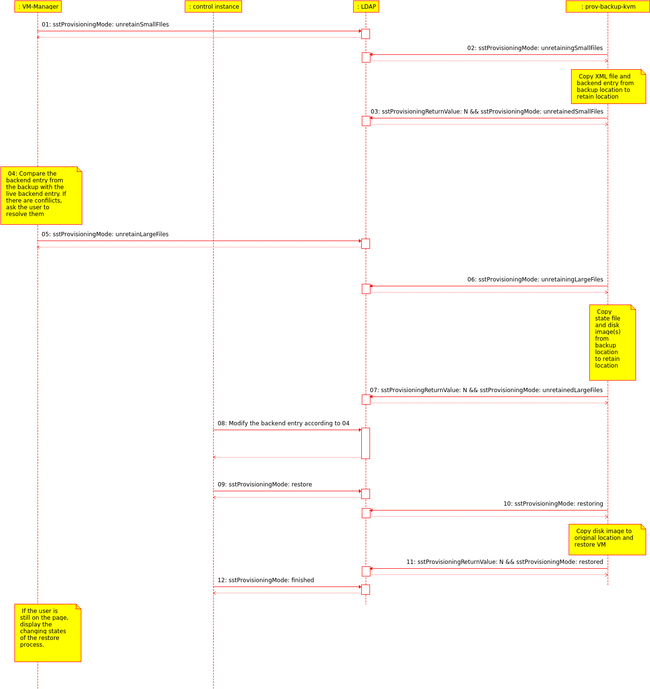

The actual KVM-Restore process is controlled completely by the Control instance daemon via the OpenLDAP directory. See [[#OpenLDAP Directory Integration|OpenLDAP Directory Integration]] for the involved attributes and their possible values.

[[File:Daemon-interaction-restore.png|thumb|650px|none|Figure 3: Communication between all involved parties during the restore process]]

You can modify/update these interactions by editing [[File:Restore-Interaction.xmi]] (you may need the [http://uml.sourceforge.net/ Umbrello UML Modeller] diagram program for KDE to display the content properly).

=== Control instance Daemon Interaction for restoring a Backup with LDIF Examples ===

</pre>

== Current Implementation (Restore) ==

'''Attention:''' The restore process is not yet defined or implemented. The following documentation describes the old restore process.

* Since the prov-backup-kvm daemon is not running on the vm-nodes (c.f. [[stoney_conductor:_Backup#Current_Implementation_.28Backup.29]]), the restore process does not work when clicking the restore icon in the web interface.


=== How to manually restore a machine from backup ===

'''Important:''' Before you continue with this guide, make sure that there is no other way to restore the machine. It might be easier and safer to recover lost files from the online backup, if one is set up for the machine.

If you really have to restore the machine from the backup:

# Stop the machine via the [https://cloud.stepping-stone.ch/vm-manager/ web interface]

# Log in (as root) on the [[VM-Node]] the machine was running on

As a first step, set some bash variables so that you can copy and paste the commands of the following guide:

'''Double check all variables you are setting here. If one is not correct, you will restore a running machine or overwrite a live disk image!'''

<source lang='bash'>
machinename="<MACHINE-NAME>" # For example: machinename="b6dc3d27-5981-4b18-8f3f-31ed3d21a3c6"
vmpool="<VM-POOL>" # For example: vmpool="0f83f084-8080-413e-b558-b678e504836e"
vmtype="<VM-TYPE>" # For example: vmtype="vm-persistent"
</source>

Change to the backup directory of the given machine and check the iterations:

<source lang='bash'>
cd /var/backup/virtualization/${vmtype}/${vmpool}/${machinename}
ls -al
</source>

Change into the most recent iteration:

<source lang='bash'>
cd 2014...
ls -al
</source>
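Because the iteration directories are named with fixed-width UTC timestamps (e.g. 20140109T134445Z), the most recent iteration is simply the lexicographically largest name. A minimal C sketch of that selection, assuming the directory names have already been read (for example with <code>readdir(3)</code>); the helper names are hypothetical:

<source lang="c">
#include <stddef.h>
#include <string.h>

/* True if name has the shape YYYYMMDDTHHMMSSZ (16 characters) */
static int looks_like_iteration(const char *name)
{
    return strlen(name) == 16 && name[8] == 'T' && name[15] == 'Z';
}

/* Return the newest iteration name, or NULL if none matches. Because
 * the names are fixed-width UTC timestamps, strcmp order equals
 * chronological order. */
const char *latest_iteration(const char *const names[], size_t n)
{
    const char *latest = NULL;

    for (size_t i = 0; i < n; i++) {
        if (!looks_like_iteration(names[i]))
            continue;                 /* skips ".", ".." and stray files */
        if (latest == NULL || strcmp(names[i], latest) > 0)
            latest = names[i];
    }
    return latest;
}
</source>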

In there you should find:

* The state file <MACHINE-NAME>.state.<BACKUP-DATE> (for example b6dc3d27-5981-4b18-8f3f-31ed3d21a3c6.state.20140109T134445Z)

* The XML description <MACHINE-NAME>.xml.<BACKUP-DATE> (for example b6dc3d27-5981-4b18-8f3f-31ed3d21a3c6.xml.20140109T134445Z)

* The LDIF file <MACHINE-NAME>.ldif.<BACKUP-DATE> (for example b6dc3d27-5981-4b18-8f3f-31ed3d21a3c6.ldif.20140109T134445Z)

* And at least one disk image <DISK-IMAGE>.qcow2.<BACKUP-DATE> (for example 8798561b-d5de-471b-a6fc-ec2b4831ed12.qcow2.20140109T134445Z)
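All of these file names end in <code>.<BACKUP-DATE></code>, so the value for <code>backupdate</code> and the disk image names without the date (as needed for <code>diskimage1</code>, <code>diskimage2</code>, ...) can be obtained by splitting at the last dot. A minimal C sketch with a hypothetical helper name:

<source lang="c">
#include <stddef.h>
#include <string.h>

/* Hypothetical helper: split "<BASE>.<BACKUP-DATE>" at the last dot.
 * Copies <BASE> (e.g. 8798561b-d5de-471b-a6fc-ec2b4831ed12.qcow2) into
 * 'base' and points '*date' at <BACKUP-DATE> (e.g. 20140109T134445Z).
 * Returns 0 on success, -1 if there is no usable dot or 'base' is too
 * small. */
int split_backup_name(const char *filename, char *base, size_t len,
                      const char **date)
{
    const char *dot = strrchr(filename, '.');
    size_t base_len;

    if (dot == NULL || dot == filename || dot[1] == '\0')
        return -1;

    base_len = (size_t)(dot - filename);
    if (base_len >= len)
        return -1;

    memcpy(base, filename, base_len);
    base[base_len] = '\0';
    *date = dot + 1;
    return 0;
}
</source>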

Now save the backup date and the disk image(s) in variables:

<source lang='bash'>
backupdate="<BACKUP-DATE>" # For example: backupdate="20140109T134445Z"
diskimage1="<DISK-IMAGE-1>.qcow2" # For example: diskimage1="8798561b-d5de-471b-a6fc-ec2b4831ed12.qcow2"
diskimage2="<DISK-IMAGE-2>.qcow2" # For example: diskimage2="aaaaaaaa-bbbb-cccc-dddd-eeeeeeeeeeee.qcow2"
...
</source>

Have another look at the different variables and '''double check them again''':

<source lang='bash'>
echo "Machine Name = ${machinename}"
echo "VM Pool = ${vmpool}"
echo "VM Type = ${vmtype}"
echo "Backup date = ${backupdate}"
echo "Disk Image 1 = ${diskimage1}"
echo "Disk Image 2 = ${diskimage2}"
...
</source>

Create a retain directory for this restore and copy the LDIF file from the backup into it:

<source lang='bash'>
currentdate=`date --utc +'%Y%m%dT%H%M%SZ'`
mkdir -p /var/virtualization/retain/${vmtype}/${vmpool}/${machinename}/${currentdate}
cp -p /var/backup/virtualization/${vmtype}/${vmpool}/${machinename}/${backupdate}/${machinename}.ldif.${backupdate} /var/virtualization/retain/${vmtype}/${vmpool}/${machinename}/${currentdate}/
</source>
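Both the backup and the retain location follow the layout <code><root>/${vmtype}/${vmpool}/${machinename}/<iteration></code>. An empty or mistyped variable silently changes the resulting path, which is why the guide insists on double-checking them. A minimal C sketch of a path builder that rejects empty components and components containing a slash; the function names are hypothetical:

<source lang="c">
#include <stdio.h>
#include <string.h>

/* A component must be non-empty and must not add directory levels */
static int valid_component(const char *s)
{
    return s[0] != '\0' && strchr(s, '/') == NULL;
}

/* Hypothetical helper: build "<root>/<vmtype>/<vmpool>/<machine>/<iteration>".
 * Returns 0 on success, -1 on an invalid component or a too small buffer. */
int iteration_path(char *buf, size_t len, const char *root,
                   const char *vmtype, const char *vmpool,
                   const char *machine, const char *iteration)
{
    if (!valid_component(vmtype) || !valid_component(vmpool) ||
        !valid_component(machine) || !valid_component(iteration))
        return -1;

    int n = snprintf(buf, len, "%s/%s/%s/%s/%s",
                     root, vmtype, vmpool, machine, iteration);
    return (n < 0 || (size_t)n >= len) ? -1 : 0;
}
</source>

The backup path uses <code>/var/backup/virtualization</code> as root and the backup date as iteration; the retain path uses <code>/var/virtualization/retain</code> and the current date.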

<!--Check if there is a difference between the current XML file and the one from the backup
<source lang='bash'>
diff -Naur /etc/libvirt/qemu/${machinename}.xml /var/virtualization/retain/${vmtype}/${vmpool}/${machinename}/${currentdate}/${machinename}.xml.${backupdate}
</source>
and '''edit the file at the retain location''' according to your needs.-->

'''Now you are entering the critical part. You will not be able to undo the following steps.'''

Check if there is a difference between the current LDAP entry and the one from the backup:
<source lang='bash'>
domain="<DOMAIN>"       # For example domain="stoney-cloud.org"
ldapbase="<LDAPBASE>"   # For example ldapbase="dc=stoney-cloud,dc=org"
ldapsearch -H ldaps://ldapm.${domain} -b "sstVirtualMachine=${machinename},ou=virtual machines,ou=virtualization,ou=services,${ldapbase}" -s sub -x -LLL -o ldif-wrap=no -D "cn=Manager,${ldapbase}" -W "(objectclass=*)" > /tmp/${machinename}.ldif
diff -Naur /tmp/${machinename}.ldif /var/virtualization/retain/${vmtype}/${vmpool}/${machinename}/${currentdate}/${machinename}.ldif.${backupdate}
</source>
Then '''edit the file at the retain location''' according to your needs.

If there are no differences (or the differences are not important), you can skip the following step. Otherwise, use [https://cloud.stepping-stone.ch/phpldapadmin PhpLdapAdmin] to delete the machine from the LDAP directory (do not forget to delete the DHCP entry <code>dn: cn=<MACHINE-NAME>,ou=virtual machines,cn=192.168.140.0,cn=config-01,ou=dhcp,ou=networks,ou=virtualization,ou=services,dc=stoney-cloud,dc=org</code>). Then apply some general replacements to the LDIF (the one you just edited) and add it to the LDAP directory:
<source lang='bash'>
# Replace "snapshotting" (the provisioning mode at the time of the backup) by "finished"
# and delete all lines containing "member"
sed -i \
    -e 's/snapshotting/finished/' \
    -e '/member.*/d' \
    /var/virtualization/retain/${vmtype}/${vmpool}/${machinename}/${currentdate}/${machinename}.ldif.${backupdate}

/usr/bin/ldapadd -H "ldaps://ldapm.${domain}" -x -D "cn=Manager,${ldapbase}" -W -f /var/virtualization/retain/${vmtype}/${vmpool}/${machinename}/${currentdate}/${machinename}.ldif.${backupdate}
</source>

Undefine the machine:
<source lang='bash'>
virsh undefine ${machinename}
</source>

Copy all disk images from the backup location back to their original location:
<source lang='bash'>
cp -p /var/backup/virtualization/${vmtype}/${vmpool}/${machinename}/${backupdate}/${diskimage1}.${backupdate} /var/virtualization/${vmtype}/${vmpool}/${diskimage1}
cp -p /var/backup/virtualization/${vmtype}/${vmpool}/${machinename}/${backupdate}/${diskimage2}.${backupdate} /var/virtualization/${vmtype}/${vmpool}/${diskimage2}
# ...
</source>

Then restore the domain from the state file at the backup location (without the <code>--xml</code> option, virsh uses the domain XML stored in the state file):
<source lang='bash'>
virsh restore /var/backup/virtualization/${vmtype}/${vmpool}/${machinename}/${backupdate}/${machinename}.state.${backupdate}
</source>

Now the machine should be up and running again, continuing from where it was stopped when the backup was taken.

If everything is OK, you can clean up the created files and directories:
<source lang='bash'>
# ${var:?} aborts the command if a variable is unset or empty, so rm never runs on a truncated path
rm -rf "/var/virtualization/retain/${vmtype:?}/${vmpool:?}/${machinename:?}/${currentdate:?}"
rm "/tmp/${machinename:?}.ldif"
</source>

== Next steps ==

[[Category: stoney conductor]]

Latest revision as of 15:43, 27 June 2014

Contents

- 1 Overview

- 2 Requirements

- 3 Backup

- 3.1 Basic idea

- 3.2 Communication through backend

- 3.2.1 Control-Instance Daemon Interaction for creating a Backup with LDIF Examples

- 3.2.1.1 Step 00: Backup Configuration for a virtual machine

- 3.2.1.2 Step 01: Initialize Backup Sub Tree (Control instance daemon)

- 3.2.1.3 Step 02: Finalize the Initialization (Control instance daemon)

- 3.2.1.4 Step 03: Start the Snapshot Process (Control instance daemon)

- 3.2.1.5 Step 04: Starting the Snapshot Process (Provisioning-Backup-KVM daemon)

- 3.2.1.6 Step 05: Finalizing the Snapshot Process (Provisioning-Backup-KVM daemon)

- 3.2.1.7 Step 06: Start the export Process (Control instance daemon)

- 3.2.1.8 Step 07: Starting the export Process (Provisioning-Backup-KVM daemon)

- 3.2.1.9 Step 08: Finalizing the export Process (Provisioning-Backup-KVM daemon)

- 3.2.1.10 Step 09: Start the commit Process (Control instance daemon)

- 3.2.1.11 Step 10: Starting the commit Process (Provisioning-Backup-KVM daemon)

- 3.2.1.12 Step 11: Finalizing the commit Process (Provisioning-Backup-KVM daemon)

- 3.2.1.13 Step 12: Finalizing the Backup Process (Control instance daemon)


- 3.3 Current Implementation (Backup)

- 3.4 Next steps

- 4 Restore

- 4.1 Basic idea

- 4.2 Communication through backend

- 4.2.1 Control instance Daemon Interaction for restoring a Backup with LDIF Examples

- 4.2.1.1 Step 01: Start the unretainSmallFiles process (Control instance daemon)

- 4.2.1.2 Step 02: Starting the unretainSmallFiles process (Provisioning-Backup-KVM daemon)

- 4.2.1.3 Step 03: Finalizing the unretainSmallFiles process (Provisioning-Backup-KVM daemon)

- 4.2.1.4 Step 05: Start the unretainLargeFiles process (Control instance daemon)

- 4.2.1.5 Step 06: Starting the unretainLargeFiles process (Provisioning-Backup-KVM daemon)

- 4.2.1.6 Step 07: Finalizing the unretainLargeFiles process (Provisioning-Backup-KVM daemon)

- 4.2.1.7 Step 09: Start the restore process (Control instance daemon)

- 4.2.1.8 Step 10: Starting the restore process (Provisioning-Backup-KVM daemon)

- 4.2.1.9 Step 11: Finalizing the restore process (Provisioning-Backup-KVM daemon)

- 4.2.1.10 Step 12: Finalizing the restore process (Control instance daemon)


- 4.3 Current Implementation (Restore)

- 4.4 Next steps

Overview

This page describes how the VMs and VM-Templates are backed up and restored inside the stoney cloud.

Requirements

- sstBackupRootDirectory: file:///var/backup/virtualization

- This directory may be a separate partition, which needs to be the same size as the partition holding the live images (it is a "copy" of the live partition)

- sstBackupRetainDirectory: file:///var/virtualization/retain

- This directory must be on the same partition as your live images

- A working stoney cloud, installed according to stoney cloud: Single-Node Installation or stoney cloud: Multi-Node Installation.

- The backup configuration must be set: stoney conductor: OpenLDAP directory data organisation.

Backup

Basic idea

The main idea for backing up a VM or a VM-Template is to divide the task into three subtasks:

- createSnapshot: Create a disk-only snapshot. A new overlay file is created and all write operations are performed on this file. The underlying disk image is now read-only.

- exportSnapshot: Copy the read-only disk image to the backup location.

- commitSnapshot: Commit the write operations from the overlay back to the underlying (original) disk image. The underlying image is now read-write again and the overlay image can be deleted.

A more detailed and technical description for these three sub-processes can be found here.

Furthermore, there is a control instance which can call these three sub-processes independently for a given machine. This way, the stoney cloud is able to handle different cases:

Backup a single machine

The procedure for backing up a single machine is very simple: just call the three sub-processes (create-, export- and commitSnapshot) one after the other. So the control instance only needs some very basic logic:

object machine = args[0];
if ( createSnapshot( machine ) ) {
    if ( exportSnapshot( machine ) ) {
        if ( commitSnapshot( machine ) ) {
            printf("Successfully backed up machine %s\n", machine);
        } else {
            printf("Error while committing snapshot for machine %s: %s\n", machine, error);
        }
    } else {
        printf("Error while exporting snapshot for machine %s: %s\n", machine, error);
    }
} else {
    printf("Error while snapshotting machine %s: %s\n", machine, error);
}
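The same control flow can be sketched in bash. This is only an illustration. The three functions are hypothetical stubs that stand in for whatever actually triggers createSnapshot, exportSnapshot and commitSnapshot; in the stoney cloud this happens through the LDAP backend.

```bash
#!/bin/bash
# Hypothetical stubs: replace with the real sub-process calls.
create_snapshot() { echo "createSnapshot $1"; }
export_snapshot() { echo "exportSnapshot $1"; }
commit_snapshot() { echo "commitSnapshot $1"; }

# Back up one machine: each step only runs if the previous one succeeded.
# The return code tells which step failed (1 = snapshot, 2 = export, 3 = commit).
backup_machine() {
    local machine="$1"
    if ! create_snapshot "${machine}"; then
        echo "Error while snapshotting machine ${machine}" >&2
        return 1
    fi
    if ! export_snapshot "${machine}"; then
        echo "Error while exporting snapshot for machine ${machine}" >&2
        return 2
    fi
    if ! commit_snapshot "${machine}"; then
        echo "Error while committing snapshot for machine ${machine}" >&2
        return 3
    fi
    echo "Successfully backed up machine ${machine}"
}
```

Like the pseudocode, this sketch does not commit when the export fails.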

Backup multiple machines at the same time

When backing up multiple machines at the same time, we need to make sure that the snapshots of the machines are taken as close together in time as possible. Therefore, the control instance should first call the createSnapshot process for all machines. After every machine has been snapshotted, the control instance can call the exportSnapshot and commitSnapshot processes for every machine. The most important part here is that the control instance remembers whether the snapshot for a given machine was successful or not: if the snapshot failed, it must not call the exportSnapshot and commitSnapshot processes for that machine. So the control instance needs a little more logic:

object machines[] = args[0];
object successful_snapshots[];

// Snapshot all machines
for ( int i = 0; i < sizeof(machines) / sizeof(object); i++ ) {
    // If the snapshot was successful, put the machine into the
    // successful_snapshots array
    if ( createSnapshot( machines[i] ) ) {
        successful_snapshots[i] = machines[i];
    } else {
        printf("Error while snapshotting machine %s: %s\n", machines[i], error);
    }
}

// Export and commit all successfully snapshotted machines
for ( int i = 0; i < sizeof(successful_snapshots) / sizeof(object); i++ ) {
    // If the element at this position is not null, the snapshot
    // for this machine was successful
    if ( successful_snapshots[i] ) {
        if ( exportSnapshot( successful_snapshots[i] ) ) {
            if ( commitSnapshot( successful_snapshots[i] ) ) {
                printf("Successfully backed up machine %s\n", successful_snapshots[i]);
            } else {
                printf("Error while committing snapshot for machine %s: %s\n", successful_snapshots[i], error);
            }
        } else {
            printf("Error while exporting snapshot for machine %s: %s\n", successful_snapshots[i], error);
        }
    }
}
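A bash sketch of this two-phase approach. It uses hypothetical stub functions in place of the real sub-processes. All snapshots are taken first, and only the machines whose snapshot succeeded are exported and committed. A bash array replaces the "null element" check of the pseudocode.

```bash
#!/bin/bash
# Hypothetical stubs standing in for the real sub-processes.
create_snapshot() { echo "createSnapshot $1"; }
export_snapshot() { echo "exportSnapshot $1"; }
commit_snapshot() { echo "commitSnapshot $1"; }

backup_machines() {
    local machine
    local -a successful_snapshots=()
    # Phase 1: snapshot all machines as close together in time as possible
    for machine in "$@"; do
        if create_snapshot "${machine}"; then
            successful_snapshots+=("${machine}")
        else
            echo "Error while snapshotting machine ${machine}" >&2
        fi
    done
    # Phase 2: export and commit only the successfully snapshotted machines
    for machine in "${successful_snapshots[@]}"; do
        if ! export_snapshot "${machine}"; then
            echo "Error while exporting snapshot for machine ${machine}" >&2
        elif ! commit_snapshot "${machine}"; then
            echo "Error while committing snapshot for machine ${machine}" >&2
        else
            echo "Successfully backed up machine ${machine}"
        fi
    done
}
```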

Sub-Processes

See also Libvirt_external_snapshot_with_GlusterFS

createSnapshot

For the commands see Libvirt_external_snapshot_with_GlusterFS#Part_2:_Create_the_snapshot_using_virsh

For the workflow see stoney_conductor:_prov-backup-kvm#createSnapshot

exportSnapshot

- Simply copy the underlying image to the backup location:

cp -p /<path>/<to>/<image>.qcow2 /<path>/<to>/<backup>/<location>/.

For the workflow see stoney_conductor:_prov-backup-kvm#exportSnapshot
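As an illustration, here is a bash sketch of the copy step. It assumes the naming scheme <DISK-IMAGE>.qcow2.<BACKUP-DATE> used by the backup files on this page, with one target directory per backup date. The function name export_image is hypothetical and not part of prov-backup-kvm.

```bash
#!/bin/bash
# Hypothetical sketch of exportSnapshot's copy step.
# Usage: export_image <underlying-image> <backup-dir> <backup-date>
export_image() {
    local image="$1" backup_dir="$2" backup_date="$3"
    mkdir -p "${backup_dir}" || return 1
    # -p preserves mode, ownership (where permitted) and timestamps of the read-only image
    cp -p "${image}" "${backup_dir}/$(basename "${image}").${backup_date}"
}
```

A call could look like `export_image /var/virtualization/${vmtype}/${vmpool}/${diskimage1} /var/backup/virtualization/${vmtype}/${vmpool}/${machinename}/${backupdate} "${backupdate}"`, with `backupdate=$(date --utc +'%Y%m%dT%H%M%SZ')`.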

commitSnapshot

For the commands see Libvirt_external_snapshot_with_GlusterFS#Cleanup.2FCommit_.28Online.29

For the workflow see stoney_conductor:_prov-backup-kvm#commitSnapshot

Communication through backend

Since the stoney cloud is (as the name already says) a cloud solution, it makes sense to involve a backend (in our case OpenLDAP) in the whole process. This way, the backup jobs can run decentralized on every vm-node: the control instance modifies the backend, and these changes are seen by the different backup daemons on the vm-nodes. The communication could look as shown in the following picture (Figure 1):

You can modify/update this workflow by editing File:Daemon-communication.xmi (you may need Umbrello UML Modeller diagram programme for KDE to display the content properly).

Control-Instance Daemon Interaction for creating a Backup with LDIF Examples

The step numbers correspond with the graphical overview from above.

Step 00: Backup Configuration for a virtual machine

# The following backup configuration says, that the backup should be done daily, at 03:00 hours (localtime).
# * * * * *  command to be executed
# - - - - -
# | | | | |
# | | | | +----- day of week (0 - 6) (Sunday=0)
# | | | +------- month (1 - 12)
# | | +--------- day of month (1 - 31)
# | +----------- hour (0 - 23)
# +------------- min (0 - 59)
# localtime in the crontab entry
dn: ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
objectclass: top
objectclass: organizationalUnit
objectclass: sstVirtualizationBackupObjectClass
objectclass: sstCronObjectClass
ou: backup
description: This sub tree contains the backup plan for the virtual machine kvm-005.
sstCronMinute: 0
sstCronHour: 3
sstCronDay: *
sstCronMonth: *
sstCronDayOfWeek: *
sstCronActive: TRUE
sstBackupRootDirectory: file:///var/backup/virtualization
sstBackupRetainDirectory: file:///var/virtualization/retain
sstBackupRamDiskLocation: file:///mnt/ramdisk-test
sstVirtualizationDiskImageFormat: qcow2
sstVirtualizationDiskImageOwner: root
sstVirtualizationDiskImageGroup: vm-storage
sstVirtualizationDiskImagePermission: 0660
sstBackupNumberOfIterations: 1
sstVirtualizationVirtualMachineForceStart: FALSE
sstVirtualizationBandwidthMerge: 0
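The sstCron* attributes map one-to-one onto the five time fields of a crontab entry, as the comment in the LDIF shows. Below is a small bash sketch of that mapping. It assumes the attribute values have already been read from the directory. The function cron_entry is hypothetical.

```bash
#!/bin/bash
# Hypothetical helper: build a crontab line from the sstCron* attribute values.
# Arguments: sstCronMinute sstCronHour sstCronDay sstCronMonth sstCronDayOfWeek command...
cron_entry() {
    local minute="$1" hour="$2" day="$3" month="$4" dow="$5"
    shift 5
    # crontab field order: minute hour day-of-month month day-of-week command
    printf '%s %s %s %s %s %s\n' "${minute}" "${hour}" "${day}" "${month}" "${dow}" "$*"
}
```

For the example entry, `cron_entry 0 3 '*' '*' '*' <command>` yields `0 3 * * * <command>`, i.e. daily at 03:00 local time. The `*` values must be quoted so that the shell does not expand them as file globs.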

Step 01: Initialize Backup Sub Tree (Control instance daemon)

The sub tree ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch reflects the time at which the backup is planned, in the form [YYYY][MM][DD]T[hh][mm][ss]Z (ISO 8601). It should be written at the time when the backup is planned and should be executed. The section 20121002T010000Z means the following:

- Year: 2012

- Month: 10

- Day of Month: 02

- Hour of Day: 01

- Minutes: 00

- Seconds: 00

Please be aware that the time is to be written in UTC (see also the comment in the LDIF example below).

# This entry is the place holder for the backup, which is to be executed at 03:00 hours (localtime with daylight-saving). This
# leads to the 20121002T010000Z timestamp (which is written in UTC).
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
objectclass: top
objectclass: sstProvisioning
objectclass: organizationalUnit
ou: 20121002T010000Z
sstProvisioningExecutionDate: 0
sstProvisioningMode: initialize
sstProvisioningReturnValue: 0
sstProvisioningState: 20121002T014513Z
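The leaf name is the planned local execution time converted to UTC. With GNU date, this conversion can be sketched as follows. The function name backup_leaf_name is hypothetical. The input should carry an explicit UTC offset (here +0200 for central European summer time, matching the example), because --utc also makes date interpret zone-less input as UTC.

```bash
#!/bin/bash
# Hypothetical helper: convert a planned local time (with UTC offset) into the
# [YYYY][MM][DD]T[hh][mm][ss]Z leaf name. Requires GNU date (--date/--utc).
backup_leaf_name() {
    date --utc --date="$1" +'%Y%m%dT%H%M%SZ'
}
```

For today's 03:00 run, the offset can be filled in by a second date call without --utc: `backup_leaf_name "$(date --date='03:00' +'%F %T %z')"`.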

Step 02: Finalize the Initialization (Control instance daemon)

# The attribute sstProvisioningState is updated with current time by the fc-brokerd, when sstProvisioningMode is modified.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningState
sstProvisioningState: 20121002T010001Z
-
replace: sstProvisioningMode
sstProvisioningMode: initialized

Step 03: Start the Snapshot Process (Control instance daemon)

With the setting of the sstProvisioningMode to snapshot, the actual backup process is kicked off by the Control instance daemon.

# The attribute sstProvisioningState is set to zero by the fc-brokerd, when sstProvisioningMode is modified to
# snapshot (this way the Provisioning-Backup-KVM daemon knows that it must start the snapshotting process).
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningState
sstProvisioningState: 0
-
replace: sstProvisioningMode
sstProvisioningMode: snapshot
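Steps 02, 03, 06 and 09 all write the same kind of change record: replace sstProvisioningState and sstProvisioningMode on the backup leaf. Below is a bash sketch that prints such a record so it can be piped into ldapmodify. The function name provisioning_ldif is hypothetical. The server and bind DN in the usage comment are taken from the examples on this page.

```bash
#!/bin/bash
# Hypothetical helper: print an LDIF change record that sets
# sstProvisioningState and sstProvisioningMode on a backup leaf.
# Usage: provisioning_ldif <dn> <state> <mode>
provisioning_ldif() {
    local dn="$1" state="$2" mode="$3"
    cat << EOF
dn: ${dn}
changetype: modify
replace: sstProvisioningState
sstProvisioningState: ${state}
-
replace: sstProvisioningMode
sstProvisioningMode: ${mode}
EOF
}

# Usage (Step 03 for the example leaf):
#   dn="ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch"
#   provisioning_ldif "${dn}" 0 snapshot | ldapmodify -H ldaps://ldapm.stepping-stone.ch/ -x -D cn=Manager,o=stepping-stone,c=ch -W
```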

Step 04: Starting the Snapshot Process (Provisioning-Backup-KVM daemon)

As soon as the Provisioning-Backup-KVM daemon receives the snapshot command, it sets the sstProvisioningMode to snapshotting to tell the Control instance daemon and other interested parties that it is snapshotting the virtual machine or virtual machine template.

# The attribute sstProvisioningMode is set to snapshotting by the Provisioning-Backup-KVM daemon.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningMode
sstProvisioningMode: snapshotting

Step 05: Finalizing the Snapshot Process (Provisioning-Backup-KVM daemon)

As soon as the Provisioning-Backup-KVM daemon has executed the snapshot command, it sets the sstProvisioningMode to snapshotted, the sstProvisioningState to the current timestamp (UTC) and the sstProvisioningReturnValue to zero. This tells the Control instance daemon and other interested parties that the snapshot of the virtual machine or virtual machine template is finished.

# The attribute sstProvisioningState is set with the current timestamp by the Provisioning-Backup-KVM daemon, when
# the attributes sstProvisioningReturnValue and sstProvisioningMode are set.
# With this combination, the fc-brokerd knows that it can proceed.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningState
sstProvisioningState: 20121002T010011Z
-
replace: sstProvisioningReturnValue
sstProvisioningReturnValue: 0
-
replace: sstProvisioningMode
sstProvisioningMode: snapshotted

Step 06: Start the export Process (Control instance daemon)

With the setting of the sstProvisioningMode to export, the Control instance daemon tells the Provisioning-Backup-KVM daemon to export the disk image to the backup location.

# The attribute sstProvisioningState is set to zero by the fc-brokerd, when sstProvisioningMode is modified to
# export (this way the Provisioning-Backup-KVM daemon knows that it must start the export process).
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningState
sstProvisioningState: 0
-
replace: sstProvisioningMode
sstProvisioningMode: export

Step 07: Starting the export Process (Provisioning-Backup-KVM daemon)

As soon as the Provisioning-Backup-KVM daemon receives the export command, it sets the sstProvisioningMode to exporting to tell the Control instance daemon and other interested parties that it is exporting the virtual machine or virtual machine template disk images.

# The attribute sstProvisioningMode is set to exporting by the Provisioning-Backup-KVM daemon.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningMode
sstProvisioningMode: exporting

Step 08: Finalizing the export Process (Provisioning-Backup-KVM daemon)

As soon as the Provisioning-Backup-KVM daemon has executed the export command, it sets the sstProvisioningMode to exported, the sstProvisioningState to the current timestamp (UTC) and the sstProvisioningReturnValue to zero. This tells the Control instance daemon and other interested parties that the export of the virtual machine or virtual machine template disk images is finished.

# The attribute sstProvisioningState is set with the current timestamp by the Provisioning-Backup-KVM daemon, when
# the attributes sstProvisioningReturnValue and sstProvisioningMode are set.
# With this combination, the fc-brokerd knows that it can proceed.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningState
sstProvisioningState: 20121002T010500Z
-
replace: sstProvisioningReturnValue
sstProvisioningReturnValue: 0
-
replace: sstProvisioningMode
sstProvisioningMode: exported

Step 09: Start the commit Process (Control instance daemon)

With the setting of the sstProvisioningMode to commit, the Control instance daemon tells the Provisioning-Backup-KVM daemon to commit the changes from the overlay file to the underlying disk image.

# The attribute sstProvisioningState is set to zero by the fc-brokerd, when sstProvisioningMode is modified to
# commit (this way the Provisioning-Backup-KVM daemon knows that it must start the commit process).
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningState
sstProvisioningState: 0
-
replace: sstProvisioningMode
sstProvisioningMode: commit

Step 10: Starting the commit Process (Provisioning-Backup-KVM daemon)

As soon as the Provisioning-Backup-KVM daemon receives the commit command, it sets the sstProvisioningMode to committing to tell the Control instance daemon and other interested parties that it is committing the changes from the overlay disk images back to the underlying ones.

# The attribute sstProvisioningMode is set to committing by the Provisioning-Backup-KVM daemon.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningMode
sstProvisioningMode: committing

Step 11: Finalizing the commit Process (Provisioning-Backup-KVM daemon)

As soon as the Provisioning-Backup-KVM daemon has executed the commit command, it sets the sstProvisioningMode to committed, the sstProvisioningState to the current timestamp (UTC) and the sstProvisioningReturnValue to zero. This tells the Control instance daemon and other interested parties that committing the changes from the overlay disk images back to the underlying ones is done.

# The attribute sstProvisioningState is set with the current timestamp by the Provisioning-Backup-KVM daemon, when
# the attributes sstProvisioningReturnValue and sstProvisioningMode are set.
# With this combination, the fc-brokerd knows that it can proceed.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningState
sstProvisioningState: 20121002T012000Z
-
replace: sstProvisioningReturnValue
sstProvisioningReturnValue: 0
-
replace: sstProvisioningMode
sstProvisioningMode: committed

Step 12: Finalizing the Backup Process (Control instance daemon)

As soon as the Control instance daemon notices that the attribute sstProvisioningMode is set to committed, it sets the sstProvisioningMode to finished and the sstProvisioningState to the current timestamp (UTC). All interested parties now know that the backup process is finished, so a new backup process can be started.

# The attribute sstProvisioningState is updated with current time by the fc-brokerd, when sstProvisioningMode is
# set to finished.
# All interested parties now know that the backup process is finished, so a new backup process can be started.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningState
sstProvisioningState: 20121002T012001Z
-
replace: sstProvisioningMode
sstProvisioningMode: finished
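Seen from the Control instance daemon, Steps 03 to 12 form a simple state machine. Whenever the Provisioning-Backup-KVM daemon reports a finished sub-process with sstProvisioningReturnValue 0, the control instance writes the next command. Below is a bash sketch of that transition table. The function name next_backup_mode is hypothetical, and a non-zero return value is only reported, not handled.

```bash
#!/bin/bash
# Hypothetical sketch: the sstProvisioningMode the Control instance daemon
# writes next, given the mode and return value reported by the backup daemon.
next_backup_mode() {
    local mode="$1" return_value="$2"
    if [ "${return_value}" != "0" ]; then
        echo "sub-process for mode ${mode} failed (return value ${return_value})" >&2
        return 1
    fi
    case "${mode}" in
        initialized) echo "snapshot" ;;
        snapshotted) echo "export" ;;
        exported)    echo "commit" ;;
        committed)   echo "finished" ;;
        # In-progress modes: nothing to do yet, wait for the backup daemon
        snapshotting|exporting|committing) echo "${mode}" ;;
        *) echo "unknown mode: ${mode}" >&2; return 2 ;;
    esac
}
```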

Current Implementation (Backup)

Since we do not have a working control instance, we need to have a workaround for backing up the machines:

- We already have a BackupKVMWrapper.pl script (File-Backend) which executes the three sub-processes in the correct order for a given list of machines (see Backup multiple machines at the same time).

- We already have the implementation for the whole backup with the LDAP-Backend (see stoney conductor: prov backup kvm).

- We can now combine these two existing scripts and create a wrapper (let's call it LDAPKVMWrapper) which adds some logic on top of BackupKVMWrapper.pl: the LDAPKVMWrapper generates the list of machines which need a backup.

The behaviour on our servers is as follows (c.f. Figure 2):

- The (decentralized) LDAPKVMWrapper (which is executed every day via cronjob) generates a list of all machines running on the current host.

- Currently the cronjob on the hosts looks like this:

00 01 * * * /usr/bin/LDAPKVMWrapper.pl | logger -t Backup-KVM

- For each of these machines:

- Check if the machine is excluded from the backup; if yes, remove the machine from the list

- Check if the last backup was successful; if not, remove the machine from the list

- Update the backup subtree for each machine in the list

- Remove the old backup leaf (the "yesterday-leaf"), and add a new one (the "today-leaf")

- After this step, the machines are ready to be backed up

- Call the KVMBackupWrapper.pl script with the machines list as a parameter

- Wait for the KVMBackupWrapper.pl script to finish

- Go again through all machines and update the backup subtree a last time

- Check if the backup was successful; if yes, set sstProvisioningMode = finished (see also TBD)

You can modify/update this workflow by editing File:wrapper-interaction.xmi (you may need Umbrello UML Modeller diagram programme for KDE to display the content properly).
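The filtering that the LDAPKVMWrapper does before calling the backup script can be sketched in bash. The two predicates are hypothetical stubs; the real wrapper queries the LDAP directory. As an assumption, "excluded" would correspond to sstBackupExcludeFromBackup: TRUE, and "last backup successful" to the previous leaf having reached sstProvisioningMode: finished.

```bash
#!/bin/bash
# Hypothetical predicates; the real wrapper looks these up in the LDAP directory.
is_excluded()            { return 1; }  # e.g. sstBackupExcludeFromBackup: TRUE
last_backup_successful() { return 0; }  # e.g. last leaf has sstProvisioningMode: finished

# Print the machines (one per line) that should be handed to the backup script.
machines_to_backup() {
    local machine
    for machine in "$@"; do
        if is_excluded "${machine}"; then
            echo "skipping ${machine}: excluded from backup" >&2
        elif ! last_backup_successful "${machine}"; then
            echo "skipping ${machine}: last backup not successful" >&2
        else
            echo "${machine}"
        fi
    done
}
```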

- If for some reason something does not work at all, the whole backup process can be deactivated by simply disabling the LDAPKVMWrapper cronjob:

crontab -e

- Comment out the LDAPKVMWrapper cronjob line:

#00 01 * * * /usr/bin/LDAPKVMWrapper.pl | logger -t Backup-KVM
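Instead of editing the crontab interactively, the line can also be commented out with a pipeline through `crontab -l` and `crontab -`. Below is a bash sketch. It assumes the cronjob line contains /usr/bin/LDAPKVMWrapper.pl as shown above. The function name is hypothetical. It is written as a pure text filter, so it can be tried on a copy of the crontab first.

```bash
#!/bin/bash
# Hypothetical filter: comment out every active line that runs LDAPKVMWrapper.pl.
# Lines that are already commented out are passed through unchanged.
disable_wrapper_cronjob() {
    sed -e '/^[[:space:]]*#/b' -e '/LDAPKVMWrapper\.pl/s/^/#/'
}

# Usage (as root on the vm-node):
#   crontab -l | disable_wrapper_cronjob | crontab -
```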

How to exclude a machine from the backup

If you want to exclude a machine from the backup run, log in to one of the vm-nodes and add the following entry to your LDAP directory:

machineuuid="<UUID OF THE MACHINE-NAME>" # e.g.: b9d13dbc-9ab7-4948-9daa-a5709de83dc2
cat << EOF | ldapadd -D cn=Manager,o=stepping-stone,c=ch -H ldaps://ldapm.stepping-stone.ch/ -W -x
dn: ou=backup,sstVirtualMachine=${machineuuid},ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
objectclass: top
objectclass: organizationalUnit
objectclass: sstVirtualizationBackupObjectClass
ou: backup
sstbackupexcludefrombackup: TRUE
EOF

If the backup subtree in the LDAP directory already exists, you need to add the sstbackupexcludefrombackup attribute:

machineuuid="<UUID OF THE MACHINE-NAME>" # e.g.: b9d13dbc-9ab7-4948-9daa-a5709de83dc2
cat << EOF | ldapadd -D cn=Manager,o=stepping-stone,c=ch -H ldaps://ldapm.stepping-stone.ch/ -W -x
dn: ou=backup,sstVirtualMachine=${machineuuid},ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
add: objectClass
objectClass: sstVirtualizationBackupObjectClass
-
add: sstbackupexcludefrombackup
sstbackupexcludefrombackup: TRUE
EOF

Re-include the machine to the backup

If you want to re-include a machine, simply delete the machine's whole backup subtree. It will be recreated during the next backup run.
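Deleting the subtree can be done with ldapdelete and its -r (recursive delete) option from the OpenLDAP client tools. Below is a bash sketch. It assumes the same server, bind DN and base as the exclusion examples above. The helper backup_subtree_dn is hypothetical.

```bash
#!/bin/bash
# Hypothetical helper: DN of a machine's backup subtree (base as in the examples above).
backup_subtree_dn() {
    printf 'ou=backup,sstVirtualMachine=%s,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch\n' "$1"
}

# Usage: delete the whole backup subtree (this cannot be undone), e.g.
#   machineuuid="<UUID OF THE MACHINE-NAME>"
#   ldapdelete -H ldaps://ldapm.stepping-stone.ch/ -x -D cn=Manager,o=stepping-stone,c=ch -W \
#       -r "$(backup_subtree_dn "${machineuuid}")"
```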

Next steps

Restore

Attention: The restore process is neither defined nor implemented yet. The following documentation describes the old restore process.

Basic idea

The restore process, similar to the backup process, can be divided into three sub-processes:

- Unretain the small files: Copy the small files (backend entry, XML description) from the backup directory to the retain directory

- Unretain the big files: Copy the big files (state file, disk image(s)) from the backup directory to the retain directory

- Restore the machine: Replace the live disk image(s) by the one(s) from the backup and restore the machine from the state file

Additionally the restore process can also be divided into two phases:

- User-Interaction phase: After the "unretain small files" step, the user needs to decide two things:

- On conflicts between the backend entry file and the XML description, the user needs to decide how to resolve the conflict(s)

- The user can also abort the restore process up to this point. After that the restore can not be aborted or undone!

- Non-User-Interaction phase: The daemons communicate through the backend between each other and the restore process continues without further user input (c.f. Communication through backend)

Sub Processes

Unretain small files

This workflow assumes that the backup directory is on the same physical server as the retain directory (protocol is file://)

- Copy the backend-entry file from the backup directory to the retain directory:

cp -p /path/to/backup/vm-001.backend /path/to/retain/vm-001.backend

- Copy the XML description from the backup directory to the retain directory:

cp -p /path/to/backup/vm-001.xml /path/to/retain/vm-001.xml

- Compare the backend-entry file (the one in the retain directory) with the live backend entry

- Resolve all conflicts between these two backend entries

- Modify the backend entry at the retain location accordingly

- Apply the same changes to the XML description at the retain location (the backend entry and the XML description need to be consistent).
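The copy part of this sub-process can be sketched as a bash function. It assumes the local file:// case described above and the vm-001.backend / vm-001.xml naming from the example paths. The function name and its arguments are hypothetical. Comparing the entries and resolving conflicts remain manual steps.

```bash
#!/bin/bash
# Hypothetical sketch: copy the small files of a machine from the backup
# directory to the retain directory (local file:// protocol only).
# Usage: unretain_small_files <backup-dir> <retain-dir> <machine>
unretain_small_files() {
    local backup_dir="$1" retain_dir="$2" machine="$3" f
    mkdir -p "${retain_dir}" || return 1
    for f in "${machine}.backend" "${machine}.xml"; do
        cp -p "${backup_dir}/${f}" "${retain_dir}/${f}" || return 1
    done
}
```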

Unretain large files

- Copy the state file from the backup directory to the retain directory:

cp -p /path/to/backup/vm-001.state /path/to/retain/vm-001.state

- Copy the disk image(s) from the backup directory to the retain directory:

cp -p /path/to/backup/vm-001.qcow2 /path/to/retain/vm-001.qcow2

- Important: If a VM has more than just one disk image, repeat this step for every disk image
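Because a VM may have several disk images, this step is naturally a loop. Below is a bash sketch. It assumes the vm-001.state naming from the example and that the disk image file names are passed explicitly. The function name is hypothetical.

```bash
#!/bin/bash
# Hypothetical sketch: copy the state file and every disk image of a machine
# from the backup directory to the retain directory.
# Usage: unretain_large_files <backup-dir> <retain-dir> <machine> <disk-image>...
unretain_large_files() {
    local backup_dir="$1" retain_dir="$2" machine="$3" image
    shift 3
    mkdir -p "${retain_dir}" || return 1
    cp -p "${backup_dir}/${machine}.state" "${retain_dir}/${machine}.state" || return 1
    # Repeat the copy for every disk image of the VM
    for image in "$@"; do
        cp -p "${backup_dir}/${image}" "${retain_dir}/${image}" || return 1
    done
}
```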

Restore the VM

- Shut down the VM if it is running:

virsh shutdown vm-001

- Undefine the VM if it is still defined:

virsh undefine vm-001

- Overwrite the original disk image:

mv /path/to/retain/vm-001.qcow2 /path/to/images/vm-001.qcow2

- Important: If a VM has more than just one disk image, repeat this step for every disk image

- Restore the VM's backend entry: write the backend entry from the retain location (/path/to/retain/vm-001.backend) to the backend

- Overwrite the VM's XML description with the one from the retain location:

cp -p /path/to/retain/vm-001.xml /path/to/xmls/vm-001.xml

- Restore the VM from the state file with the corrected XML:

virsh restore /path/to/retain/vm-001.state --xml /path/to/xmls/vm-001.xml
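The order of these steps matters. The VM must be gone before its disk image is overwritten, and the state file can only be restored once the image and the XML are in place. Below is a bash sketch of the sequence for a single disk image, assuming the example paths above. The function name, the DRY_RUN switch and the run wrapper are hypothetical. The backend entry update is left as a comment because it depends on the backend in use.

```bash
#!/bin/bash
# Hypothetical sketch of the "Restore the VM" steps for a single disk image.
# With DRY_RUN=1 (the default) the commands are only printed, not executed.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "${DRY_RUN}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Usage: restore_vm <vm> <retain-dir> <images-dir> <xmls-dir>
restore_vm() {
    local vm="$1" retain="$2" images="$3" xmls="$4"
    run virsh shutdown "${vm}"   # only requests a shutdown; wait for "shut off" in practice
    run virsh undefine "${vm}"
    run mv "${retain}/${vm}.qcow2" "${images}/${vm}.qcow2"
    # (write ${retain}/${vm}.backend to the backend here)
    run cp -p "${retain}/${vm}.xml" "${xmls}/${vm}.xml"
    run virsh restore "${retain}/${vm}.state" --xml "${xmls}/${vm}.xml"
}
```

Note that `virsh shutdown` returns before the guest has actually stopped. A real script would poll `virsh domstate vm-001` until it reports `shut off` before undefining the VM and moving the images.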

Communication through backend

The actual KVM-Restore process is controlled completely by the Control instance daemon via the OpenLDAP directory. See OpenLDAP Directory Integration for the involved attributes and possible values.

You can modify/update these interactions by editing File:Restore-Interaction.xmi (you may need Umbrello UML Modeller diagram programme for KDE to display the content properly).

Control instance Daemon Interaction for restoring a Backup with LDIF Examples

Step 01: Start the unretainSmallFiles process (Control instance daemon)

The first step of the restore process is to copy the small files (in this case the XML file and the LDIF) from the configured backup location to the configured retain location.

# The attribute sstProvisioningState is set to zero by the Control instance daemon, when sstProvisioningMode is modified to
# unretainSmallFiles (this way the Provisioning-Backup-KVM daemon knows that it must start the unretainSmallFiles process).
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningState
sstProvisioningState: 0
-
replace: sstProvisioningMode
sstProvisioningMode: unretainSmallFiles

Step 02: Starting the unretainSmallFiles process (Provisioning-Backup-KVM daemon)

As soon as the Provisioning-Backup-KVM daemon receives the command to unretain the small files, it sets the sstProvisioningMode to unretainingSmallFiles to tell the Control instance daemon and other interested parties that it is unretaining the small files for the virtual machine or virtual machine template.

# The attribute sstProvisioningMode is set to unretainingSmallFiles by the Provisioning-Backup-KVM daemon.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningMode
sstProvisioningMode: unretainingSmallFiles

Step 03: Finalizing the unretainSmallFiles process (Provisioning-Backup-KVM daemon)

As soon as the Provisioning-Backup-KVM daemon has executed the commands to unretain the small files, it sets the sstProvisioningMode to unretainedSmallFiles, the sstProvisioningState to the current timestamp (UTC) and sstProvisioningReturnValue to zero to tell the Control instance daemon and other interested parties that the unretaining of all the small files from the configured backup location is finished.

# The attribute sstProvisioningState is set to the current timestamp by the Provisioning-Backup-KVM daemon when
# the attributes sstProvisioningReturnValue and sstProvisioningMode are set.
# With this combination, the Control instance daemon knows that it can proceed.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningState
sstProvisioningState: 20121002T012000Z
-
replace: sstProvisioningReturnValue
sstProvisioningReturnValue: 0
-
replace: sstProvisioningMode
sstProvisioningMode: unretainedSmallFiles
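Steps 03, 07 and 11 also share one pattern: the timestamp, the return value and the final mode are written in a single modification. The hypothetical bash helper `finish_step_ldif` below prints this change for a given DN, mode and return value. It is for illustration only. The timestamp defaults to the current UTC time in the same format as the examples. You can pass it explicitly to get reproducible output.

```shell
# Hypothetical helper: print the LDIF the Provisioning-Backup-KVM daemon writes
# when a step is done (steps 03, 07 and 11 on this page).
#   $1 = DN of the backup entry
#   $2 = final mode (unretainedSmallFiles, unretainedLargeFiles or restored)
#   $3 = return value (0 on success)
#   $4 = optional timestamp; defaults to the current UTC time (GNU date)
finish_step_ldif() {
  local ts=${4:-$(date --utc +'%Y%m%dT%H%M%SZ')}
  printf '%s\n' \
    "dn: $1" \
    "changetype: modify" \
    "replace: sstProvisioningState" \
    "sstProvisioningState: $ts" \
    "-" \
    "replace: sstProvisioningReturnValue" \
    "sstProvisioningReturnValue: $3" \
    "-" \
    "replace: sstProvisioningMode" \
    "sstProvisioningMode: $2"
}
```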

Step 05: Start the unretainLargeFiles process (Control instance daemon)

The next step in the restore process is to copy the large files (the state file and the disk images) from the configured backup directory to the configured retain directory.

# The attribute sstProvisioningState is set to zero by the Control instance daemon when sstProvisioningMode is modified to
# unretainLargeFiles (this way the Provisioning-Backup-KVM daemon knows that it must start the unretainLargeFiles process).
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningState
sstProvisioningState: 0
-
replace: sstProvisioningMode
sstProvisioningMode: unretainLargeFiles

Step 06: Starting the unretainLargeFiles process (Provisioning-Backup-KVM daemon)

As soon as the Provisioning-Backup-KVM daemon receives the command to unretain the large files, it sets the sstProvisioningMode to unretainingLargeFiles to tell the Control instance daemon and other interested parties that it is unretaining the large files for the virtual machine or virtual machine template.

In the meantime, the vm-manager merges the LDIF unretained in step 02 with the one in the live directory to sort out possible differences in the configuration of the virtual machine.

# The attribute sstProvisioningMode is set to unretainingLargeFiles by the Provisioning-Backup-KVM daemon.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningMode
sstProvisioningMode: unretainingLargeFiles

Step 07: Finalizing the unretainLargeFiles process (Provisioning-Backup-KVM daemon)

As soon as the Provisioning-Backup-KVM daemon has executed the commands to unretain the large files, it sets the sstProvisioningMode to unretainedLargeFiles, the sstProvisioningState to the current timestamp (UTC) and sstProvisioningReturnValue to zero to tell the Control instance daemon and other interested parties that the unretaining of all the large files from the configured backup location is finished.

# The attribute sstProvisioningState is set to the current timestamp by the Provisioning-Backup-KVM daemon when
# the attributes sstProvisioningReturnValue and sstProvisioningMode are set.
# With this combination, the Control instance daemon knows that it can proceed.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningState
sstProvisioningState: 20121002T012000Z
-
replace: sstProvisioningReturnValue
sstProvisioningReturnValue: 0
-
replace: sstProvisioningMode
sstProvisioningMode: unretainedLargeFiles

Step 09: Start the restore process (Control instance daemon)

Since all necessary files are now in the configured retain location, the restore process can be started. In this step, the disk images are simply copied back to their original location and the VM is restored from the state file (which is also in the configured retain location).

# The attribute sstProvisioningState is set to zero by the Control instance daemon when sstProvisioningMode is modified to
# restore (this way the Provisioning-Backup-KVM daemon knows that it must start the restore process).
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningState
sstProvisioningState: 0
-
replace: sstProvisioningMode
sstProvisioningMode: restore

Step 10: Starting the restore process (Provisioning-Backup-KVM daemon)

As soon as the Provisioning-Backup-KVM daemon receives the restore command, it sets the sstProvisioningMode to restoring to tell the Control instance daemon and other interested parties that it is restoring the virtual machine or virtual machine template.

# The attribute sstProvisioningMode is set to restoring by the Provisioning-Backup-KVM daemon.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningMode
sstProvisioningMode: restoring
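Step 09 describes what happens while the mode is restoring: the disk images are copied back and the VM is restored from the state file. Below is a minimal bash sketch of that work. It makes three assumptions. The unretained files sit in one retain directory. The disk images carry a `.<BACKUP-DATE>` suffix, as in the backup location. The live image directory is passed in as a parameter, because this page does not spell it out. The names `run` and `restore_from_retain` are hypothetical. With `DRY_RUN=1`, the commands are only printed.

```shell
# Print the command instead of running it when DRY_RUN is set.
run() {
  if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi
}

# Minimal sketch (assumption, not the daemon's code) of the restore step.
#   $1 = retain directory holding the unretained files
#   $2 = live directory the disk images are copied back to (hypothetical parameter)
#   $3 = state file name, $4 = XML description file name
restore_from_retain() {
  local retain=$1 live=$2 state=$3 xml=$4 img
  for img in "$retain"/*.qcow2.*; do
    [ -e "$img" ] || { echo "no disk images in $retain" >&2; return 1; }
    # Strip the .<BACKUP-DATE> suffix to get the original image name.
    run cp -p "$img" "$live/$(basename "${img%.*}")" || return 1
  done
  run virsh restore "$retain/$state" --xml "$retain/$xml"
}
```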

Step 11: Finalizing the restore process (Provisioning-Backup-KVM daemon)

As soon as the Provisioning-Backup-KVM daemon has executed the restore command, it sets the sstProvisioningMode to restored, the sstProvisioningState to the current timestamp (UTC) and sstProvisioningReturnValue to zero to tell the Control instance daemon and other interested parties that the restore process is finished.

# The attribute sstProvisioningState is set to the current timestamp by the Provisioning-Backup-KVM daemon when
# the attributes sstProvisioningReturnValue and sstProvisioningMode are set.
# With this combination, the Control instance daemon knows that it can proceed.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningState
sstProvisioningState: 20121002T012000Z
-
replace: sstProvisioningReturnValue
sstProvisioningReturnValue: 0
-
replace: sstProvisioningMode
sstProvisioningMode: restored

Step 12: Finalizing the restore process (Control instance daemon)

As soon as the Control instance daemon notices that the attribute sstProvisioningMode is set to restored, it sets the sstProvisioningMode to finished and the sstProvisioningState to the current timestamp (UTC). All interested parties now know that the restore process is finished.

# The attribute sstProvisioningState is updated with the current time by the Control instance daemon when sstProvisioningMode is
# set to finished.
# All interested parties now know that the restore process is finished.
dn: ou=20121002T010000Z,ou=backup,sstVirtualMachine=kvm-005,ou=virtual machines,ou=virtualization,ou=services,o=stepping-stone,c=ch
changetype: modify
replace: sstProvisioningState
sstProvisioningState: 20121002T012001Z
-
replace: sstProvisioningMode
sstProvisioningMode: finished
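Taken together, steps 03 to 12 form a small state machine on the Control instance daemon side. A step counts as done once sstProvisioningState holds a timestamp and sstProvisioningReturnValue is zero. The next mode then depends on which step finished. The bash function below illustrates that logic; it is not the daemon's code. This page does not describe how the daemon reacts to a non-zero return value, so the sketch simply requests nothing in that case.

```shell
# Minimal sketch of the Control instance daemon's decision logic (illustration
# only). Prints the mode to request next, or nothing if it has to wait.
#   $1 = sstProvisioningMode, $2 = sstProvisioningState,
#   $3 = sstProvisioningReturnValue
control_next() {
  # A step only counts as done when the state holds a UTC timestamp such as
  # 20121002T012000Z and the return value is zero.
  case $2 in
    [0-9][0-9][0-9][0-9][0-9][0-9][0-9][0-9]T[0-9][0-9][0-9][0-9][0-9][0-9]Z) ;;
    *) return 0 ;;
  esac
  [ "$3" = 0 ] || return 0
  case $1 in
    unretainedSmallFiles) echo unretainLargeFiles ;;
    unretainedLargeFiles) echo restore ;;
    restored)             echo finished ;;
  esac
}
```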

Current Implementation (Restore)

Attention: The restore process is not yet defined or implemented. The following documentation describes the old restore process.

- Since the prov-backup-kvm daemon is not running on the VM-Nodes (cf. stoney_conductor:_Backup#Current_Implementation_.28Backup.29), the restore process does not work when clicking the icon in the web interface.

How to manually restore a machine from backup

Important: Before you continue with this guide, make sure that there is no other way to restore the machine. If the machine has an online backup set up, it might be easier and safer to get the lost files from there.

If you really have to restore the machine from the backup:

- Stop the machine via the web interface

- Log in (as root) to the VM-Node the machine was running on

As a first step, set some useful bash variables so that you can copy and paste the commands of the following guide:

Double-check all the variables you set here. If one of them is wrong, you risk restoring a running machine or overwriting a live disk image!

machinename="<MACHINE-NAME>"   # For example: machinename="b6dc3d27-5981-4b18-8f3f-31ed3d21a3c6"
vmpool="<VM-POOL>"             # For example: vmpool="0f83f084-8080-413e-b558-b678e504836e"
vmtype="<VM-TYPE>"             # For example: vmtype="vm-persistent"
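To make the double-check harder to skip, a small bash guard can refuse to continue while a variable is still empty or holds a placeholder. This is a sketch. Based on the examples, it assumes that machine names and VM pools are lowercase UUIDs. The function names are hypothetical.

```shell
# Succeeds if $1 looks like a lowercase UUID (assumption based on the examples).
is_uuid() {
  local re='^[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}$'
  [[ $1 =~ $re ]]
}

# Refuse to continue while machinename, vmpool or vmtype is unset or still a
# <PLACEHOLDER>.
check_restore_vars() {
  is_uuid "${machinename:-}" || { echo "machinename does not look like a UUID: '${machinename:-}'" >&2; return 1; }
  is_uuid "${vmpool:-}"      || { echo "vmpool does not look like a UUID: '${vmpool:-}'" >&2; return 1; }
  case ${vmtype:-} in
    ''|'<'*'>') echo "vmtype is not set: '${vmtype:-}'" >&2; return 1 ;;
  esac
}
```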

Change to the backup directory for the given machine and check the iterations:

cd /var/backup/virtualization/${vmtype}/${vmpool}/${machinename}
ls -al
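The iteration directories are named after UTC timestamps such as 20140109T134445Z, so their names sort chronologically. This means a script can also pick the most recent one. A minimal bash sketch (the function `latest_iteration` is hypothetical):

```shell
# Print the name of the most recent iteration directory below $1.
# Bash expands globs in sorted order, and timestamp names sort by time,
# so the last match is the newest iteration.
latest_iteration() {
  local d latest=
  for d in "$1"/[0-9]*/; do
    [ -d "$d" ] || continue   # no match: the glob stays literal
    latest=${d%/}
  done
  [ -n "$latest" ] || return 1
  printf '%s\n' "${latest##*/}"
}
```

From within the machine's backup directory, `cd "$(latest_iteration .)"` would then change into the newest iteration.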

Change into the most recent iteration:

cd 2014...
ls -al

In there you should have:

- The state file <MACHINE-NAME>.state.<BACKUP-DATE> (for example b6dc3d27-5981-4b18-8f3f-31ed3d21a3c6.state.20140109T134445Z)

- The XML description <MACHINE-NAME>.xml.<BACKUP-DATE> (for example b6dc3d27-5981-4b18-8f3f-31ed3d21a3c6.xml.20140109T134445Z)

- The ldif file <MACHINE-NAME>.ldif.<BACKUP-DATE> (for example b6dc3d27-5981-4b18-8f3f-31ed3d21a3c6.ldif.20140109T134445Z)

- And at least one disk image <DISK-IMAGE>.qcow2.<BACKUP-DATE> (for example 8798561b-d5de-471b-a6fc-ec2b4831ed12.qcow2.20140109T134445Z)
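Before going on, you can quickly verify that an iteration is complete by checking for exactly these files. A minimal bash sketch, assuming the naming scheme above (the function `check_backup_files` is hypothetical):

```shell
# Check that an iteration directory holds the state, XML and LDIF files plus
# at least one disk image for the given machine and backup date.
#   $1 = iteration directory, $2 = machine name, $3 = backup date
check_backup_files() {
  local dir=$1 name=$2 bdate=$3 ext img found=0
  for ext in state xml ldif; do
    [ -f "$dir/$name.$ext.$bdate" ] || { echo "missing: $name.$ext.$bdate" >&2; return 1; }
  done
  for img in "$dir"/*.qcow2."$bdate"; do
    [ -f "$img" ] && found=1
  done
  [ "$found" -eq 1 ] || { echo "no disk image for $bdate" >&2; return 1; }
}
```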

Now save the backup date and the disk image name(s) in variables:

backupdate="<BACKUP-DATE>"          # For example: backupdate="20140109T134445Z"
diskimage1="<DISK-IMAGE-1>.qcow2"   # For example: diskimage1="8798561b-d5de-471b-a6fc-ec2b4831ed12.qcow2"
diskimage2="<DISK-IMAGE-2>.qcow2"   # For example: diskimage2="aaaaaaaa-bbbb-cccc-dddd-eeeeeeeeeeee.qcow2"
...
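Instead of typing the disk image names, you can derive them from the files in the iteration directory by stripping the `.<BACKUP-DATE>` suffix. A minimal bash sketch with the hypothetical function `list_disk_images`:

```shell
# Print the disk image names (without the .<BACKUP-DATE> suffix) of one
# backup iteration, one per line.
#   $1 = iteration directory, $2 = backup date
list_disk_images() {
  local img suffix=".$2"
  for img in "$1"/*.qcow2."$2"; do
    [ -f "$img" ] || continue   # no match: the glob stays literal
    img=${img##*/}
    printf '%s\n' "${img%"$suffix"}"
  done
}
```

For example, `diskimage1=$(list_disk_images . "${backupdate}" | sed -n 1p)` would fill the first variable from inside the iteration directory.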

Look at the variables once more and double-check them:

echo "Machine Name = ${machinename}"
echo "VM Pool = ${vmpool}"
echo "VM Type = ${vmtype}"
echo "Backup date = ${backupdate}"
echo "Disk Image 1 = ${diskimage1}"
echo "Disk Image 2 = ${diskimage2}"
...

Copy all these files to the retain location:

currentdate=$(date --utc +'%Y%m%dT%H%M%SZ')
mkdir -p /var/virtualization/retain/${vmtype}/${vmpool}/${machinename}/${currentdate}
cp -p /var/backup/virtualization/${vmtype}/${vmpool}/${machinename}/${backupdate}/${machinename}.ldif.${backupdate} /var/virtualization/retain/${vmtype}/${vmpool}/${machinename}/${currentdate}/

Now you are entering the critical part: you won't be able to undo the following steps.

Check whether there is a difference between the current LDAP entry and the one from the backup:

domain="<DOMAIN>"       # For example: domain="stoney-cloud.org"
ldapbase="<LDAPBASE>"   # For example: ldapbase="dc=stoney-cloud,dc=org"
ldapsearch -H ldaps://ldapm.${domain} \
  -b "sstVirtualMachine=${machinename},ou=virtual machines,ou=virtualization,ou=services,${ldapbase}" \
  -s sub -x -LLL -o ldif-wrap=no -D "cn=Manager,${ldapbase}" -W \
  "(objectclass=*)" > /tmp/${machinename}.ldif
diff -Naur /tmp/${machinename}.ldif /var/virtualization/retain/${vmtype}/${vmpool}/${machinename}/${currentdate}/${machinename}.ldif.${backupdate}

Then edit the LDIF file at the retain location according to your needs.

If there are no differences (or the differences are not important), you can skip the following step. Otherwise, use phpLDAPadmin to delete the machine from the LDAP directory (do not forget to delete the DHCP entry dn: cn=<MACHINE-NAME>,ou=virtual machines,cn=192.168.140.0,cn=config-01,ou=dhcp,ou=networks,ou=virtualization,ou=services,dc=stoney-cloud,dc=org). Then make some general replacements in the LDIF you just edited and add it to the LDAP directory:

sed -i \
  -e 's/snapshotting/finished/' \
  -e '/member.*/d' \
  /var/virtualization/retain/${vmtype}/${vmpool}/${machinename}/${currentdate}/${machinename}.ldif.${backupdate}
/usr/bin/ldapadd -H "ldaps://ldapm.${domain}" -x -D "cn=Manager,${ldapbase}" -W \
  -f /var/virtualization/retain/${vmtype}/${vmpool}/${machinename}/${currentdate}/${machinename}.ldif.${backupdate}
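Because `sed -i` rewrites the file in place, it can be worth previewing its effect before running the commands above. Note that `/member.*/d` deletes every line that contains the string member, not only member attributes. Below is a minimal bash sketch (the function name is hypothetical). It runs the same two expressions without `-i` and shows the result as a unified diff. Like `diff`, it returns 1 when there are differences.

```shell
# Show what the sed expressions would change in the LDIF file $1, without
# modifying it ("-" makes diff read the edited version from stdin).
preview_ldif_fixups() {
  sed -e 's/snapshotting/finished/' -e '/member.*/d' "$1" | diff -u "$1" -
}
```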

Undefine the machine:

virsh undefine ${machinename}

Copy all the disk images from the backup location back to their original location